BridgeSim: Unveiling the OL-CL Gap in End-to-End Autonomous Driving

BridgeSim: Unveiling the OL-CL Gap in End-to-End Autonomous Driving

Seth Z. Zhao* 1 , Luobin Wang* 2 , Hongwei Ruan 2 , Yuxin Bao 1 , Yilan Chen 2 , Ziyang Leng 1 , Abhijit Ravichandran 2 , Honglin He 1 , Zewei Zhou 1 , Xu Han 1 , Abhishek Peri 3 , Zhiyu Huang 1 , Pranav Desai 3 , Henrik Christensen 2 , Jiaqi Ma 1 , Bolei Zhou 1

1 UCLA , 2 UCSD , 3 Qualcomm

Abstract

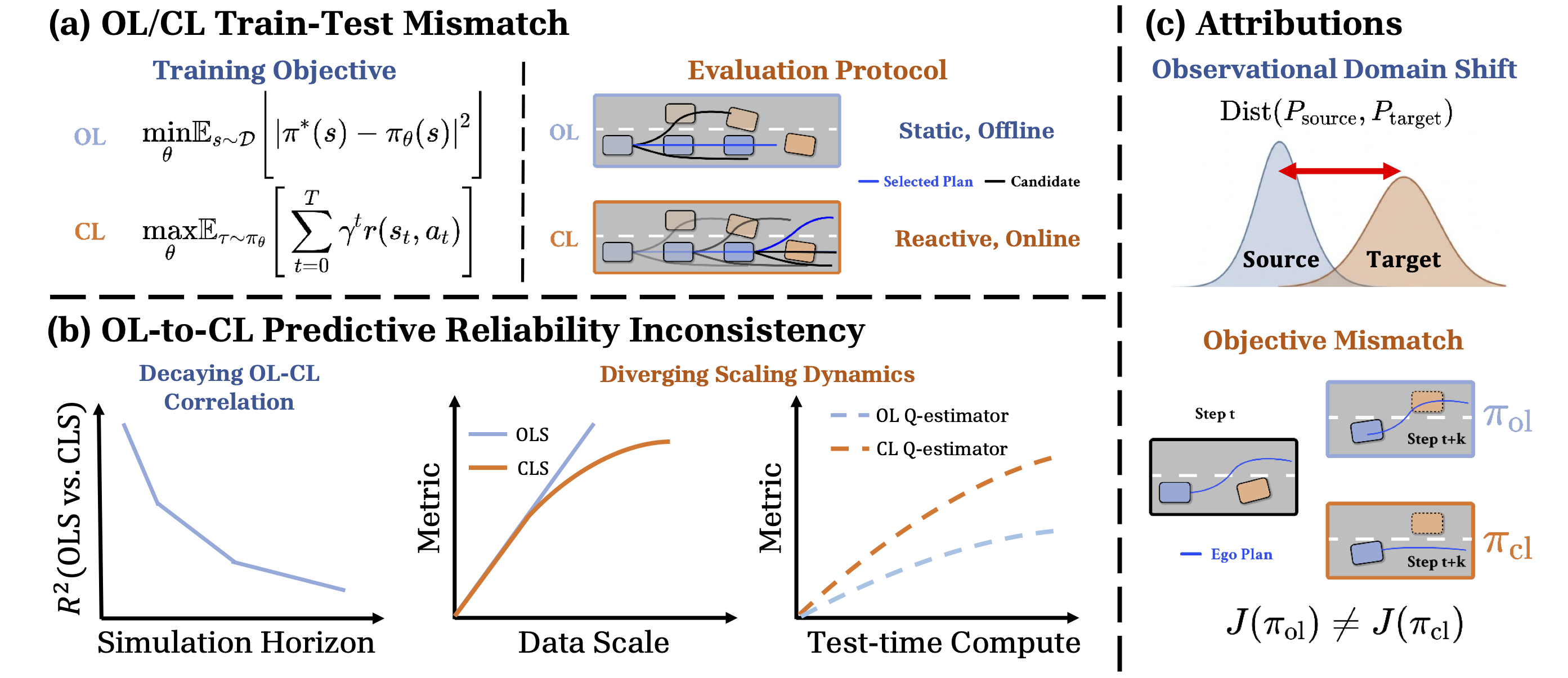

Open-loop (OL) to closed-loop (CL) gap (OL-CL gap) exists when OL-pretrained policies scoring high in OL evaluations fail to transfer effectively in closed-loop deployment. In this paper, we unveil the root causes of this systemic failure and propose a practical remedy. Specifically, we demonstrate that OL policies suffer from Observational Domain Shift and Objective Mismatch. We show that while the former is largely recoverable with adaptation techniques, the latter creates a structural inability to model complex reactive behaviors, which forms the primary OL-CL gap. We find that a wide range of OL policies learn a biased Q-value estimator that neglects both the reactive nature of CL simulations and the temporal awareness needed to reduce compounding errors. To this end, we propose a Test-Time Adaptation (TTA) framework that calibrates observational shift, reduces state-action biases, and enforces temporal consistency. Extensive experiments show that TTA effectively mitigates planning biases and yields superior scaling dynamics than its baseline counterparts. Furthermore, our analysis highlights the existence of blind spots in standard OL evaluation protocols that fail to capture the realities of closed-loop deployment.

Decomposing OL-CL Gap

We define E2E policy as:

The root causes of the OL-CL deployment gap are decomposed into two key factors:

- Observational Domain Shift occurs when there exists an observational domain shift and the source policy receives collapsed partially observable states in the target simulator.

- Objective Mismatch occurs when OL policies, which is optimized against OL proxy reward during the training time, encounters the CL objective that the learned Q-function gives deviated estimates of true state-action values.

Test-time Adaptation (TTA) Framework

We propose a test-time adaptation (TTA) framework to improve closed-loop robustness of pretrained E2E policies without retraining, consisting of an Observational Calibrator and a Test-time Policy Adaptation procedure.

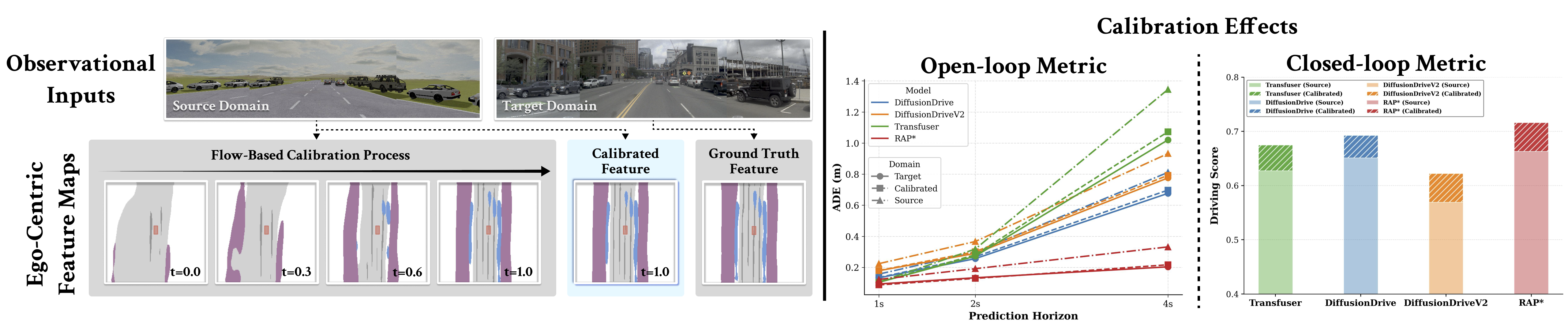

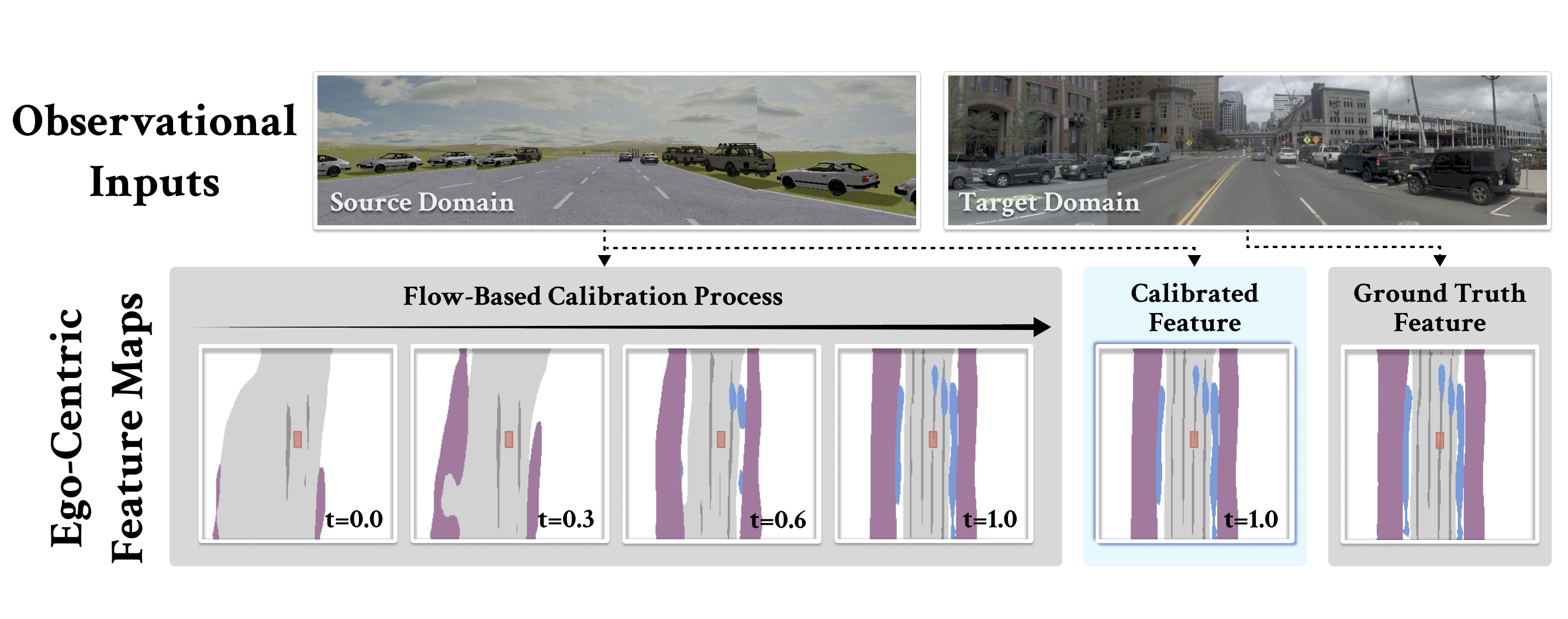

Observational Calibrator

Source and target domains induce different latent distributions due to domain-dependent sensing, causing the pretrained policy to receive out-of-distribution representations. We use flow matching to learn a transport map that aligns the source latent distribution to the target, mapping source observations into representations compatible with the downstream policy and effectively recovering open-loop performance.

Test-time Policy Adaptation

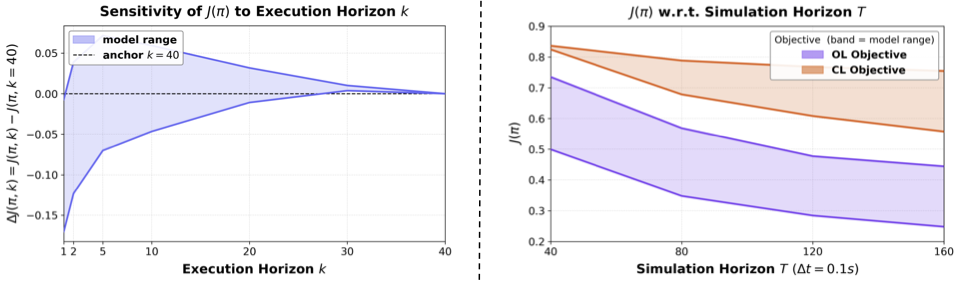

Truncated Q-value Estimation

Standard Q-value estimation accumulates rewards infinitely, making it intractable under an open-loop model beyond the planning horizon $H$. We introduce a truncated action-value estimator that explicitly cancels the infinite tail:

At each step, the agent selects the candidate trajectory maximizing $\hat{Q}^{\pi}$, dynamically filtering out plans suffering from biased open-loop estimation.

Adaptive Replan

Standard test-time scorers operate memorylessly, selecting from the current candidate set at every step and causing action chattering. Instead, we carry forward the unexecuted remainder of the previous plan and retain it unless a new candidate yields a strictly higher estimated return, promoting temporal consistency and reducing unnecessary replanning across consecutive decision steps.

BridgeSim Simulator

BridgeSim is a unified cross-simulator platform designed to evaluate OL pretrained E2E driving policies within high-fidelity CL environments in the MetaDrive simulator. BridgeSim designs a Furthermore, BridgeSim offers a flexible deployment setting to simulate open-loop policy with varying execution frequencies and simulation horizons, providing the functional depth necessary for systematic and rigorous diagnosis of OL-CL gap. Consequently, BridgeSim bridges the critical divide between open-loop benchmarks limited to short-term static prediction and existing closed-loop frameworks that often lack the comprehensive functionality and annotations required for complex, reactive stress-testing.

![]() Unified structural framework on low-level control for policy transfer to maintain state-action compatibility across domains

Unified structural framework on low-level control for policy transfer to maintain state-action compatibility across domains

![]() Unified scenario protocol to incorporate diverse map scenarios (e.g., nuPlan, WOMD, and nuScenes) with heterogeneous traffic modes (e.g., log-replay, IDM and adversarial policies) to stress-test E2E driving policies under closed-loop simulation environment.

Unified scenario protocol to incorporate diverse map scenarios (e.g., nuPlan, WOMD, and nuScenes) with heterogeneous traffic modes (e.g., log-replay, IDM and adversarial policies) to stress-test E2E driving policies under closed-loop simulation environment.

![]() Unified open-loop and closed-loop simulation modes and metrics for comprehensive evaluations.

Unified open-loop and closed-loop simulation modes and metrics for comprehensive evaluations.

Citation

@article{zhao2026bridgesim,

title={BridgeSim: Unveiling the OL-CL Gap in End-to-End Autonomous Driving},

author={Zhao, Seth Z and Wang, Luobin and Ruan, Hongwei and Bao, Yuxin and Chen, Yilan and Leng, Ziyang and Ravichandran, Abhijit and He, Honglin and Zhou, Zewei and Han, Xu and others},

journal={arXiv preprint arXiv:2604.10856},

year={2026}

}